
3/2/2024 Text to speech whisper mac

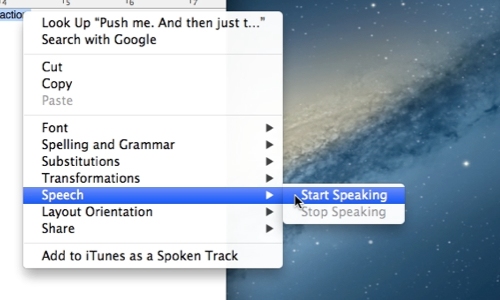

The best graphics cards aren't just for gaming, especially not when AI-based algorithms are all the rage. Besides ChatGPT, Bard, and Bing Chat (aka Sydney), which all run on data center hardware, you can run your own local versions of Stable Diffusion, Text Generation, and various other tools. One of those other tools, OpenAI's Whisper, is our subject today. It can transcribe audio substantially faster than real time on your GPU, with the entire process running locally for free. You can also run it on your CPU, though the speed drops precipitously.

Note also that Whisper can be used for real-time speech recognition, similar to what you can get through Windows or Dragon NaturallySpeaking. We did not attempt to use it in that fashion, as we were more interested in checking performance. Real-time speech recognition only needs to keep up with maybe 100–150 words per minute (maybe a bit more if someone is a fast talker). We wanted to let the various GPUs stretch their legs a bit and show just how fast they can go.

There are a few options for running Whisper, on Windows or otherwise. Of course there's the OpenAI GitHub (instructions and details below). There's also the Const-Me project WhisperDesktop, a Windows executable written in C++. It uses DirectCompute rather than PyTorch, which means it will run on any DirectX 11 compatible GPU, yes, including things like Intel integrated graphics. It also means that it's not using special hardware like Nvidia's Tensor cores or Intel's XMX cores.

Getting WhisperDesktop running proved very easy, assuming you're willing to download and run someone's unsigned executable. (I was, though you can also try to compile the code yourself if you want.) Just grab WhisperDesktop.zip and extract it somewhere. Besides the EXE and DLL, you'll need one or more of the OpenAI models, which you can grab via the links in the application window. You'll need the GGML versions; we used ggml-medium.en.bin (1.42GiB) and ggml-large.bin (2.88GiB) for our testing.

Our test PC is our standard GPU testing system, which comes with basically the highest-performance parts possible (within reason). We did run a few tests on a slightly slower Core i9-12900K and found performance was only slightly lower, at least for WhisperDesktop, but we're not sure how far down the CPU stack you can go before it really starts to affect performance.

For our test input audio, we grabbed an MP3 from our Asus RTX 4090 unboxing video that we posted last year. It's a 13-minute video, which is long enough to give the faster GPUs a chance to flex their muscle.

As noted above, we ran two different versions of the Whisper models, medium.en and large. We tested each card with multiple runs, discarding the first and using the highest of the remaining three runs. We then converted the resulting time into words per minute: the medium.en model transcribed 1,570 words, while the large model produced 1,580 words.

We also collected data on GPU power use while running the transcription, using an Nvidia PCAT v2 device. We start logging power use right before starting the transcription and stop right after the transcription finishes. The GPUs generally don't end up anywhere near 100% load during the workload, so power ends up being quite a bit below the GPUs' rated TGPs.

Our base performance testing uses the medium model, which tends to be a bit less accurate overall. There are a few cases where it got the transcription "right" while the large model was incorrect, but there were far more cases where the reverse was true.

We're probably getting close to CPU limits as well, or at least the scaling doesn't exactly match up with what we'd expect. Nvidia's RTX 4090 and RTX 4080 take the top two spots, with just over and just under 3,000 words per minute transcribed. AMD's RX 7900 XTX and 7900 XT come next, followed by the RTX 4070 Ti and 4070, then the RTX 30. The RX 6950 XT then comes in just ahead of the RTX 3070, which is definitely not the expected result.
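The words-per-minute figures in this article come from a simple conversion: the number of words transcribed divided by the transcription time in minutes, after discarding the first run and keeping the best of the remaining three. The sketch below shows that arithmetic, assuming run times are recorded in seconds. The function names and the timings in the usage example are hypothetical placeholders, not measured results; the 1,570 and 1,580 word counts and the 100–150 WPM real-time range come from the text.

```python
# Words transcribed per model, as reported in the text.
WORD_COUNTS = {"medium.en": 1570, "large": 1580}

# Upper end of the real-time speech range mentioned in the text (100-150 WPM).
REAL_TIME_WPM = 150


def best_run_seconds(run_times_s: list[float]) -> float:
    """Discard the first (warm-up) run and return the fastest remaining time."""
    if len(run_times_s) < 2:
        raise ValueError("need the discarded first run plus at least one timed run")
    # Highest performance means the shortest transcription time.
    return min(run_times_s[1:])


def words_per_minute(word_count: int, seconds: float) -> float:
    """Convert a transcription time in seconds into words per minute."""
    if seconds <= 0:
        raise ValueError("transcription time must be positive")
    return word_count / (seconds / 60.0)


def benchmark(model: str, run_times_s: list[float]) -> tuple[float, float]:
    """Return (WPM, multiple of real-time speech) for one card/model pair."""
    wpm = words_per_minute(WORD_COUNTS[model], best_run_seconds(run_times_s))
    return wpm, wpm / REAL_TIME_WPM


if __name__ == "__main__":
    # Hypothetical timings in seconds: one discarded run, then three timed runs.
    wpm, factor = benchmark("medium.en", [40.0, 31.4, 31.0, 31.8])
    print(f"{wpm:.0f} WPM, about {factor:.0f}x a fast talker")
```

Taking the minimum time rather than the average matches the "highest of the remaining three runs" approach described in the text; an average would smooth out noise but also fold in any background hiccups.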
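Because power is logged only from just before the transcription starts to just after it finishes, a run's average power is the energy under the logged power curve divided by the length of that window. Below is a minimal sketch, assuming the logger's data has been exported as (seconds, watts) pairs; that format, the function names, and the sample values are hypothetical and do not reflect the PCAT v2's actual output. Energy is integrated with the trapezoidal rule, which also handles unevenly spaced samples.

```python
# Hypothetical sample format: (timestamp in seconds, GPU power in watts).
Sample = tuple[float, float]


def energy_joules(samples: list[Sample]) -> float:
    """Integrate power over time with the trapezoidal rule (watts x seconds = joules)."""
    if len(samples) < 2:
        raise ValueError("need at least two power samples")
    total = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        if t1 <= t0:
            raise ValueError("timestamps must be strictly increasing")
        total += 0.5 * (p0 + p1) * (t1 - t0)
    return total


def average_watts(samples: list[Sample]) -> float:
    """Time-weighted average power over the logging window."""
    energy = energy_joules(samples)  # validates the samples first
    duration = samples[-1][0] - samples[0][0]
    return energy / duration


def fraction_of_tgp(samples: list[Sample], rated_tgp_w: float) -> float:
    """Average power as a fraction of a card's rated TGP."""
    return average_watts(samples) / rated_tgp_w


if __name__ == "__main__":
    # Hypothetical readings taken every half second during a transcription.
    log = [(0.0, 90.0), (0.5, 210.0), (1.0, 230.0), (1.5, 220.0), (2.0, 100.0)]
    print(f"{energy_joules(log):.1f} J, {average_watts(log):.1f} W average")
    print(f"{fraction_of_tgp(log, 450.0):.0%} of a hypothetical 450 W TGP")
```

Averaging over time rather than over raw samples keeps the result correct even if the logger's sample interval drifts.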
